In this case, we start with N DF, spend 1 DF on the intercept, and p DF on the slopes, leaving us with (N - p - 1) DF to estimate uncertainty. With multiple regression, we have p ‘slope’ parameters and a sample of N. If we have N points, then we use 2 of them to fit a line, and the remaining N-2 points represent random noise. A line can always be fit through two points.

With linear regression, we also have a nice geometric interpretation of DF.

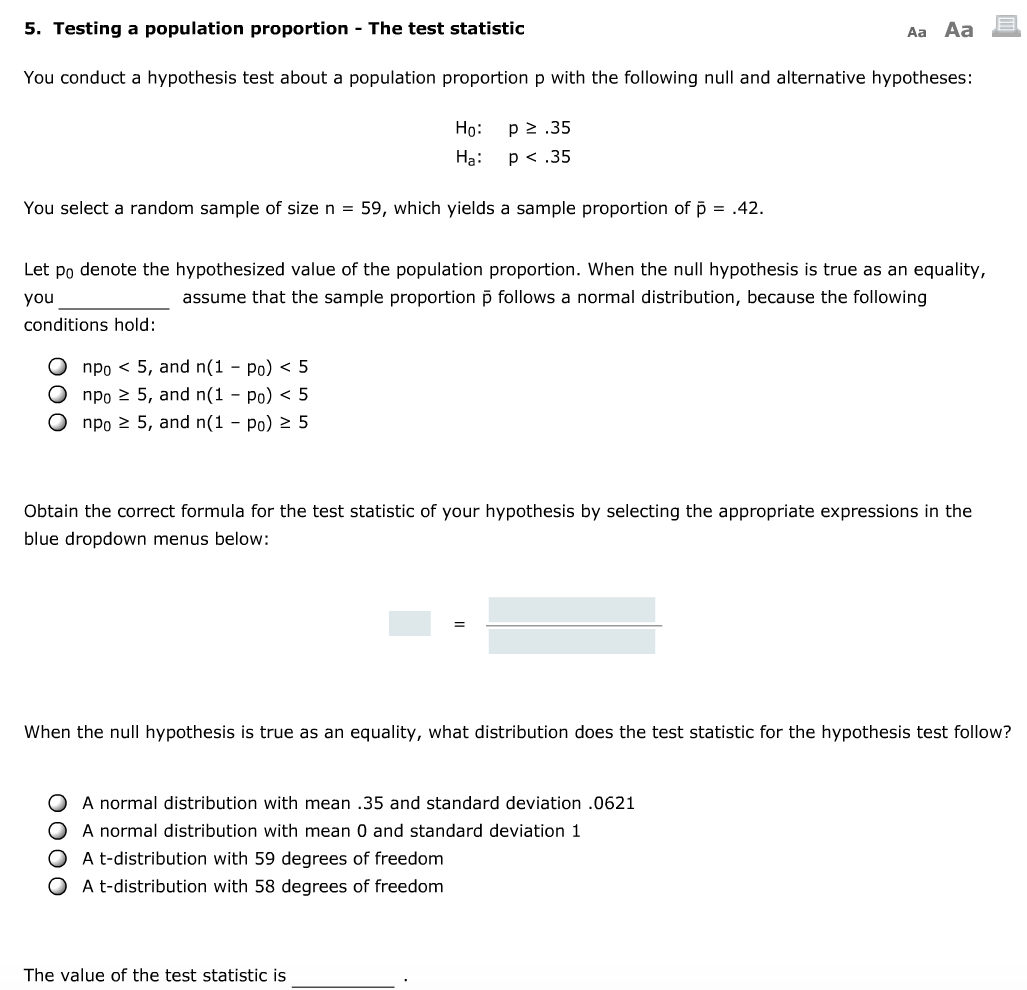

That leaves (N-2) DF for estimating the uncertainty. We need to estimate the slope and the intercept, so that's 1 DF each, or 2 DF total. In a simple linear regression setting, we have N independent observations, and each observation has two values in an (x,y) pair. Note that ANOVA requires the assumption that all the groups have equal variance, such that we use all the remaining degrees of freedom to estimate that collective standard deviation. That leaves (N-k) DF for estimating the standard deviation WITHIN each group. These (k-1) DF are spent on measuring the standard deviation BETWEEN the groups. Why k-1? Because the last group mean can be estimated from the other groups and the grand mean. So we need 1 DF for the grand mean, and (k-1) DF for the k group means. Let's call that N DF for simplicity.Ī One-Way ANOVA is a comparison of the group means to the grand mean (mean of ALL observations). , Nk from each of k populations, respectively. The closer the estimates are, the closer to the ideal (N1 + N2 - 2) DF we assume that we have. More commonly, we calculate a 'DF equivalent' based on how close the two variance estimates are. If we don't have a computer on hand, we can rely on the worst-case scenario, which is that we know only as much as what we know about the smallest of the two samples, that is min(N1 - 1, N2 - 1) DF. How much we know about the standard deviation of this contrast depends on how much information we have about each of the two standard deviations. We’re still estimating a single contrast between the population means, and we need to apply a single t-distribution to the contrast. If you do not assume equal variance between the two populations, you need spend (N1 - 1) of the DF on estimating the standard deviation of population 1, and (N2 - 1) on estimating the standard deviation of population 2. If you assume that both groups have the same variance, then you can spend all (N1 + N2 - 2) DF on estimating that one ‘pooled’ variance. 
How that N1 + N2 - 2 is spent depends on your assumptions about the variance. The remaining N1 + N2 - 2 can be used on estimating the uncertainty. That implies that you have N1 + N2 degrees of freedom, and that you spend 2 of them estimating the 2 means. This test uses samples of size N1 and N2 from these two populations respectively. In a two sample t-test setting, you need to estimate the difference (or, more generally, a contrast), between the means of two different populations. The remaining N-1 can be 'spent' on estimating the standard deviation. Taking the mean and standard deviation from a sample of size N from a single population, we start with N DF, and 'spend' 1 of them on estimating the mean, which is necessary for calculating the standard deviation. In this article, degrees of freedom are explained through these lenses through some common hypothesis tests, with some selected topics like saturation, fractional DF, and mixed effect models at the end. You earn it by taking independent sample units, and you spend it on estimating population parameters or on information required to get compute test statistics. I personally prefer to think of DF as a kind of statistical currency. You can interpret degrees of freedom, or DF as the number of (new) pieces of information that go into a statistic. R-bloggers - blog aggregator with statistics articles generally done with R software. Kaggle Self posts with throwaway accounts will be deleted by AutoModerator Memes and image macros are not acceptable forms of content. Just because it has a statistic in it doesn't make it statistics. Please try to keep submissions on topic and of high quality. They will be swiftly removed, so don't waste your time! Please kindly post those over at: r/homeworkhelp. This is not a subreddit for homework questions. All Posts Require One of the Following Tags in the Post Title! If you do not flag your post, automoderator will delete it: Tag
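The one-sample case described above can be sketched in Python using only the standard library. The helper names `deviations_from_mean` and `sample_sd` are hypothetical, and the data values are made up for illustration. The sketch shows why only N-1 pieces of information remain once the mean is estimated. The deviations from the sample mean always sum to zero, so the last deviation is fixed by the other N-1.

```python
import statistics
from typing import List, Sequence


def deviations_from_mean(xs: Sequence[float]) -> List[float]:
    """Deviations of each observation from the sample mean."""
    mean = sum(xs) / len(xs)  # estimating the mean "spends" 1 DF
    return [x - mean for x in xs]


def sample_sd(xs: Sequence[float]) -> float:
    """Sample standard deviation with N - 1 degrees of freedom in the denominator."""
    devs = deviations_from_mean(xs)
    df = len(xs) - 1  # what is left after spending 1 DF on the mean
    return (sum(d * d for d in devs) / df) ** 0.5


data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
devs = deviations_from_mean(data)
# The deviations sum to zero, so the last one is determined by the other N - 1.
last_from_others = -sum(devs[:-1])
print(devs[-1], last_from_others)
# Our N - 1 version agrees with the standard library's sample standard deviation.
print(sample_sd(data), statistics.stdev(data))
```

`statistics.stdev` also divides by N - 1, so the two printed standard deviations should agree up to floating-point rounding.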
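For the equal-variance two-sample t-test described above, here is a minimal sketch of how all (N1 + N2 - 2) DF go into one pooled variance. The function name `pooled_t_test` and the sample values are hypothetical. Each group's variance is weighted by its own DF (N1 - 1 or N2 - 1), and the weighted sum is divided by the total DF.

```python
import statistics
from typing import Sequence, Tuple


def pooled_t_test(x: Sequence[float], y: Sequence[float]) -> Tuple[float, int]:
    """Two-sample t statistic assuming equal variances; returns (t, df)."""
    n1, n2 = len(x), len(y)
    df = n1 + n2 - 2  # N1 + N2 DF earned, 2 spent on the two means
    # statistics.variance divides by N - 1, so multiplying back by N - 1 recovers
    # each group's sum of squares; dividing by the total DF pools them.
    pooled_var = ((n1 - 1) * statistics.variance(x) + (n2 - 1) * statistics.variance(y)) / df
    se = (pooled_var * (1 / n1 + 1 / n2)) ** 0.5  # standard error of the difference
    t = (statistics.mean(x) - statistics.mean(y)) / se
    return t, df


group_a = [5.1, 4.9, 5.6, 5.8, 6.0]
group_b = [4.2, 4.8, 4.4, 5.0]
t, df = pooled_t_test(group_a, group_b)
print(f"t = {t:.3f} on {df} DF")
```

With 5 and 4 observations, the statistic is referred to a t-distribution with 7 DF.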
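The 'DF equivalent' for the unequal-variance case can be sketched with the Welch-Satterthwaite formula. The helper names `welch_df` and `df_bounds` and the data are hypothetical. The result always lies between the worst case, min(N1 - 1, N2 - 1), and the pooled ideal, N1 + N2 - 2. With equal sample sizes and equal sample variances, it returns exactly N1 + N2 - 2. With unequal sample sizes, the upper bound is instead approached when s1²/(N1(N1 - 1)) and s2²/(N2(N2 - 1)) match.

```python
import statistics
from typing import Sequence, Tuple


def welch_df(x: Sequence[float], y: Sequence[float]) -> float:
    """Welch-Satterthwaite 'DF equivalent' for a contrast of two means without equal variances."""
    n1, n2 = len(x), len(y)
    a = statistics.variance(x) / n1  # squared standard error of mean 1 (uses N1 - 1 DF)
    b = statistics.variance(y) / n2  # squared standard error of mean 2 (uses N2 - 1 DF)
    return (a + b) ** 2 / (a ** 2 / (n1 - 1) + b ** 2 / (n2 - 1))


def df_bounds(n1: int, n2: int) -> Tuple[int, int]:
    """Worst case (smaller sample alone) and best case (fully pooled) DF."""
    return min(n1 - 1, n2 - 1), n1 + n2 - 2


x_same = [1.0, 2.0, 3.0, 4.0, 5.0]
y_same = [2.0, 3.0, 4.0, 5.0, 6.0]     # same spread as x_same
y_wide = [2.0, 9.0, 1.0, 12.0, 6.0]    # much larger spread
print(df_bounds(5, 5))
print(welch_df(x_same, y_same))  # equal spreads: the pooled ideal
print(welch_df(x_same, y_wide))  # unequal spreads: fractional DF, closer to the worst case
```

The fractional result for the second pair is one example of the "fractional DF" idea mentioned in the introduction.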
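The one-way ANOVA accounting above can be checked with a small sketch. The function name `one_way_anova` and the three groups are made up for illustration. It spends 1 DF on the grand mean and k-1 on the group means. The remaining N-k go into a single within-group sum of squares, which reflects the equal-variance assumption.

```python
from typing import Dict, Sequence


def one_way_anova(groups: Sequence[Sequence[float]]) -> Dict[str, float]:
    """Partition sums of squares and DF for a one-way ANOVA and return the F statistic."""
    all_obs = [v for g in groups for v in g]
    n, k = len(all_obs), len(groups)
    grand_mean = sum(all_obs) / n                     # 1 DF
    group_means = [sum(g) / len(g) for g in groups]   # k - 1 further DF
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # One pooled within-group sum of squares: all groups share a common variance.
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, group_means) for v in g)
    df_between = k - 1
    df_within = n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return {"df_between": df_between, "df_within": df_within, "F": f_stat}


result = one_way_anova([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]])
print(result)
```

With N = 9 and k = 3, this gives 2 DF between groups and 6 within. Together with the 1 DF for the grand mean, that accounts for all 9.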
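The simple regression count and its geometric interpretation can be sketched as follows. The helper names `fit_line` and `residual_sd` and all data points are hypothetical. With exactly two points, the least-squares line passes through both, every residual is zero, and no DF are left for the noise. With five points, 3 DF remain.

```python
from typing import List, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float, List[float], int]:
    """Least-squares line; returns (intercept, slope, residuals, residual DF)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx            # 1 DF
    intercept = my - slope * mx  # 1 DF
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, ys)]
    return intercept, slope, residuals, n - 2


def residual_sd(residuals: Sequence[float], df: int) -> float:
    """Estimate of the noise standard deviation; impossible when no DF are left."""
    if df <= 0:
        raise ValueError("no degrees of freedom left to estimate the noise")
    return (sum(r * r for r in residuals) / df) ** 0.5


# Two points: the line goes through both, the residuals vanish, and 0 DF remain.
_, _, res2, df2 = fit_line([0.0, 1.0], [1.0, 3.0])
print(res2, df2)

# Five points: 3 DF remain for estimating the noise.
b0, b1, res5, df5 = fit_line([0.0, 1.0, 2.0, 3.0, 4.0], [1.1, 2.9, 5.2, 6.8, 9.1])
print(b0, b1, residual_sd(res5, df5))
```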
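The multiple regression count (N - p - 1) can be illustrated with a small, dependency-free ordinary least squares sketch. The names `solve` and `fit_ols` are hypothetical, and the data are made up. It solves the normal equations by Gauss-Jordan elimination. That is fine for tiny textbook examples, but real analyses would use a numerically stabler routine such as `numpy.linalg.lstsq`. With N = p + 1 observations, the model is saturated: it fits the data exactly and leaves 0 DF for uncertainty.

```python
from typing import List, Sequence, Tuple


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the square system a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(xs: Sequence[Sequence[float]], ys: Sequence[float]) -> Tuple[List[float], List[float], int]:
    """OLS with an intercept via the normal equations; returns (coefficients, residuals, residual DF)."""
    design = [[1.0, *map(float, row)] for row in xs]  # leading 1.0 is the intercept column
    k = len(design[0])  # p slopes + 1 intercept
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(design, ys)) for i in range(k)]
    beta = solve(xtx, xty)
    residuals = [y - sum(bj * v for bj, v in zip(beta, r)) for r, y in zip(design, ys)]
    n, p = len(ys), k - 1
    return beta, residuals, n - p - 1


# Saturated: N = 3 observations, p = 2 slopes, so N - p - 1 = 0.
beta_sat, res_sat, df_sat = fit_ols([[0, 0], [1, 0], [0, 1]], [1.0, 3.0, 5.0])
print(beta_sat, res_sat, df_sat)

# N = 5, p = 2: 2 DF remain for estimating uncertainty.
beta5, res5, df5 = fit_ols([[0, 0], [1, 0], [0, 1], [1, 1], [2, 1]], [1.0, 3.1, 4.9, 7.2, 8.8])
print(beta5, df5)
```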